Corrupted Economic Research: two illustrations

By (i) Norbert Häring and (ii) Edward Fullbrook

(i) Corrupted economic research, academic gatekeepers and the media: The case of tuition fees

By Norbert Häring, Frankfurt

The stakes are high. Funding for British universities (and the pay of vice-chancellors) has improved tremendously since tuition fees were introduced in 1998, at 1,000 GBP for wealthier students, and later raised to 9,250 GBP for all students today. This funding, paid out of students' pockets, now seems endangered. In a speech on February 22, Prime Minister Theresa May bowed to strong political pressure and announced a thorough review of university funding. One of the review's main topics will be how to ensure that tertiary education is accessible to everyone, whatever their background.

Quite conveniently for the universities, three education economists published a piece in September 2017 in the prestigious Working Paper series of the National Bureau of Economic Research (NBER), titled “The End of Free College in England: Implications for Quality, Enrolments, and Equity”. In it, they purport to show that “tuition fees, at least in the English case supported their goals of increasing quality, quantity, and equity in higher education”.

For European readers, they published the same paper as a discussion paper of the Centre for Economic Performance (CEP) at the London School of Economics and Political Science, a centre funded by the Economic and Social Research Council. On 21 October they summarised their findings on Vox (voxeu.org), the platform of the London-based Centre for Economic Policy Research (CEPR), under the title “The real costs of free University: Lessons from the UK”. Vox reports 12,500 reads for this piece.

A surprising finding

In their paper, Richard Murphy (U. of Texas, Austin), Judith Scott-Clayton (Columbia) and Gillian Wyness (University College London) claim to have found that the British system of tuition fees has improved enrolment numbers for young people from disadvantaged households, both in absolute terms and relative to those from wealthier households. The authors and the institutions involved made sure that the media took note of these findings, especially the most surprising one: that the enrolment gap between rich and poor students narrowed after steep fees were introduced. The Daily Mail, Bloomberg, Forbes and others reported on it prominently. The headlines and bullet points read:

“England Ended Free College — Which Was Great For Students” (Forbes)

“Free College Would Help the Rich More Than the Poor. Bernie Sanders’s idea sounds great, but there are better ways to aid students who can’t afford tuition” (Bloomberg)

“Twice as many poor students attend universities since fees were brought in: Fifth of those from homes in the lowest income bracket now study. Biggest growth in numbers has been among those from lowest income families. Study casts doubt on claims that fees discourage poorer pupils from university” (Daily Mail)

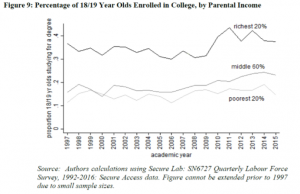

These statements, all based on the research results reported by the trio, have turned out to be plain wrong. The authors claim that the enrolment rate of the most disadvantaged households rose the most from 1997 to 2015 and that it doubled over that period to 20 percent. The corresponding graph of the data, however, shows only a small increase in the bottom quintile, from about 11 to about 13 percent. The graph also shows that enrolment in the middle 60 percent of the distribution increased significantly more.

I pointed out the discrepancy to the authors. One of them, Wyness, admitted the mistake and (wrongly) claimed that the numbers referred to a different graph, which had inadvertently been left out. She pointed me to a blog post titled “Up, up and away: the era of high tuition fees”, published on 7 December, in which she offers further evidence for the claim. When asked, Wyness also stated that there was no need to correct the factual mistake in the other publications, as it was only preliminary research. She apparently did not consider that the extensive media coverage of her erroneous preliminary research made a correction necessary.

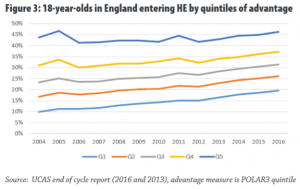

On inspection, Wyness’ description of the additional evidence in her post on the specialised blog WonkHE turned out to be highly misleading as well. She presents a graph of enrolment rates over time by region, with regions grouped into quintiles according to their enrolment rates. The text and headline, however, falsely present the data as referring to quintiles of households. Only if you know that “POLAR3” is a classification of local areas, not of households, or look it up in the source report, will you realise that you have been misled. The increase in enrolment from 10 to 20 percent can indeed be seen in the graph, but it refers to a different time frame and to regions instead of households. Thus, the claim in the faulty NBER paper that the enrolment rate of the most disadvantaged households doubled to 20 percent between 1997 and 2015 cannot possibly refer to this graph.

Since this began to look like a deliberate attempt to mislead readers and the media, on 23 January I asked CEPR director Richard Baldwin, editor-in-chief of Vox, and NBER president James Poterba about their policies on correcting or retracting erroneous or fraudulent preliminary research published through their channels. I explained the problems with the tuition paper. Baldwin asked for further explanation and then did not respond to further requests for comment. Poterba reacted swiftly. He alerted the authors to the possibility of posting revised versions of the working paper in the NBER series, which they soon did. The misleading statements on Vox, in contrast, have not yet been corrected or retracted, nor have those in Wyness’ blog post on WonkHE.

The revised paper was faulty again

The relevant section of the revised NBER Working Paper, however, was again misleading. The authors corrected the false description of the time series on students by income. They went on to say that these data were not very reliable, and added the graph from Wyness’ blog post showing the data by region. As Wyness had done in the blog post, they presented the regional data as if they were household data. Only in a footnote did they explain that these were not actually household data (implicitly admitting that the household terminology used in the main text was wrong and misleading).

I alerted James Poterba to this discrepancy and to the fact that the unsubstantiated claim of improved equity was still in the paper's abstract, even though nothing in the paper supported it any longer. He had the authors provide a second revision, which is the version currently (2 March) online. In this version, the authors clearly state that the additional data are by region only. They do not even explicitly argue that narrowing enrolment differences by region would imply narrowing differences by parental income. Still, the abstract with the claim of improved equity remained unchanged:

“We conclude that tuition fees, at least in the English case supported their goals of increasing quality, quantity, and equity in higher education.”

A prequel

The NBER Working Paper was preceded by a working paper published by the Brookings Institution in the US in April 2017. Notably, this paper, whose relevant section was based on the same data and graphs, made a much more modest claim about equity. Above the same graph of the enrolment rates of poor and more affluent students, it asked: “Have socioeconomic gaps in enrollment declined after the 1998 reforms?” and answered: “They have at least stabilized.”

There does not seem to have been much media interest in that moderate and seemingly correct finding. It is hard to interpret the change in the description of the data, from fairly accurate to plainly wrong, as an innocent error. The explanation Wyness gave is implausible. The supposedly forgotten graph she presented in her December blog post does not lend itself at all to the description given in the NBER working paper. Rather, it seems to have been constructed afterwards, with a time span chosen to yield a doubling of enrolment to 20 percent, in order to offer those who had noticed the error in the NBER and CEP working papers an innocent explanation for the “error”.

Earlier still, in 2011, Wyness co-authored a study funded by the Institute for Fiscal Studies, “The Impact of Tuition Fees and Support on University Participation in the UK”. In this study, she and her co-authors Lorraine Dearden and Emla Fitzsimons concluded “that tuition fees have had a negative effect on participation, with a £1,000 increase in fees resulting in a decrease in participation of 3.9 percentage points.” This inconvenient earlier result is not mentioned in the NBER Working Paper or in Wyness’ other current publications reaching the opposite conclusion. The 2011 working paper led to a journal publication in 2014, which conveniently left out tuition fees and focused only on cost-of-living support. The 2014 paper does not mention the 2011 paper either.

Unwillingness to correct a convenient finding

NBER president James Poterba had to push the authors to correct the blatant and admitted error in the NBER Working Paper; he reacted swiftly both times he was notified of the mistakes. He did not, however, go beyond having the most blatant mistakes corrected. Despite the suspicious circumstances, he did not seem to make any effort to check whether the paper's central claims, as stated in the abstract, were still valid after the revision. When alerted to this remaining problem, he did not react. Thus, even readers of the abstract of the revised paper will come away with the distorted impression that there is evidence that the enrolment gap between poor and more affluent students narrowed after high tuition fees were introduced.

The same is true of the Centre for Economic Performance. Because I came across the CEP discussion paper later, I only alerted CEP director Stephen Machin to the issue on 26 February. On 27 February he informed me that the authors had now revised the discussion paper. This was more than a month after Wyness had admitted the error. As with the revised NBER Working Paper, the abstract remained unchanged, even though the authors no longer claim any improvement in equity in the relevant section of the paper.

This is not a satisfactory outcome for a correction exercise, even though NBER and CEP performed best in this respect.

The authors did not revise any publication whose gatekeepers did not push them to do so. The Vox article remains unrevised, even though its editor, Baldwin, had been alerted. The same is true of Wyness’ blog post on WonkHE.

On 25 January 2018, Wyness presented her group’s findings at the Economic and Social Research Institute (ESRI) in Dublin. According to ESRI's report on the event and the slides presented, she did not seem to see any need to correct the misleading wording in past publications on the group's joint research.

The media did not seem to care at all about correcting the strong but false claims about the impact of tuition fees that they had widely disseminated.

Economics blogger Noah Smith, author of the Bloomberg piece, and the responsible editor at Bloomberg did not respond after being alerted by e-mail on 22 February that the relevant sections of the NBER paper had been revised. To my knowledge, Smith’s reporting on Bloomberg has not been corrected.

Eleanor Harding, author of the Daily Mail article, was also alerted on 22 February. She did not respond. I was not able to find any correction by the Daily Mail.

Forbes (corrections@forbes.com) was alerted on February 26 to the revisions of the research the magazine had reported on. To my knowledge, there has been no reaction or correction so far.

Summary and assessment of the success of this academic disinformation campaign

The authors inserted a spectacular, surprising and easily refutable claim into a working paper that they had previously published in a correct and more moderate version. They presumably alerted the media to the strong finding. Few seemed to notice the mistake, or care. Those who did notice were told it was an innocent error and were referred to a blog post with misleading information that ostensibly gave the claim a better foundation. The conveniently mistaken statement was widely reported amid the heated debate about tuition fees. After the error was exposed, the authors were initially unwilling to correct it. Of the gatekeepers of the academic distribution channels, only NBER and CEP showed some concern about having the false claim corrected, and they were content to have this done unobtrusively and only partially. Hardly anybody will have noticed the revision, since the abstract remained unchanged and neither the reason for the revision nor its content was specified. CEPR’s Vox showed no interest in correcting the errors pointed out to it. None of the media outlets that had reported the false conclusions and were alerted to the revision replied or, to my knowledge, corrected the misleading reports with which they had influenced the public debate. The false claim in the paper can still be counted as a great public relations success for supporters of tuition fees and for the universities, despite having been exposed as false and partly revised. It might, however, damage the reputations of the authors and of some of the academic gatekeepers.

Norbert Häring is a financial journalist, book author and blogger.

(ii) Bribe Offers for Academics

By Edward Fullbrook

Norbert Häring’s story about misleading academic research reminds me of another story.

Big money offering bribes to academics is, I suspect, more common than people, academics included, realize. I first encountered the practice when I was an undergraduate. My university’s most popular course, “Insurance”, was taught by an economics professor whom students affectionately called Doc Elliot. He taught not only how the insurance industry purported to work, but also how it really worked, and he frequently accepted off-campus speaking engagements.

Doc Elliot may be the only person who has ever lived who could talk insurance and make people laugh. Certainly, he was the funniest person I’d ever known; and, despite our 35-year age gap, we became friends of a sort. One day I was sitting with him in his office when, handing me a business letter, he said, “Here, this is what a bribe offer looks like.”

The letter was from a national association of insurance companies. It praised his eminence as a world authority on insurance and said they would like to be able to call on him occasionally for advice. For this they would pay him $80,000 a year. At the time, the highest professorial salary at the university was $10,000, and as far as I know there was no money in this professor’s family.

“In the world we live in,” explained Doc Elliot, “refraining from telling the truth is often worth lots more than telling it. I get between-the-lines offers like this all the time. But I think this one deserves to go up on my bulletin board.”

EDITOR’S NOTE

Do you have any examples of compromised research or distorted presentation of research findings? Have you been pressured to frame your research to suit an agenda? We would be interested to hear. Either comment on this article or send me an email.

From: pp. 13–16 of WEA Commentaries 8(1), February 2018

https://www.worldeconomicsassociation.org/files/Issue8-1.pdf
